The research group at Universidade de Vigo is developing an automated Scan-to-BIM pipeline that transforms raw 3D scans of indoor spaces into structured building information models.

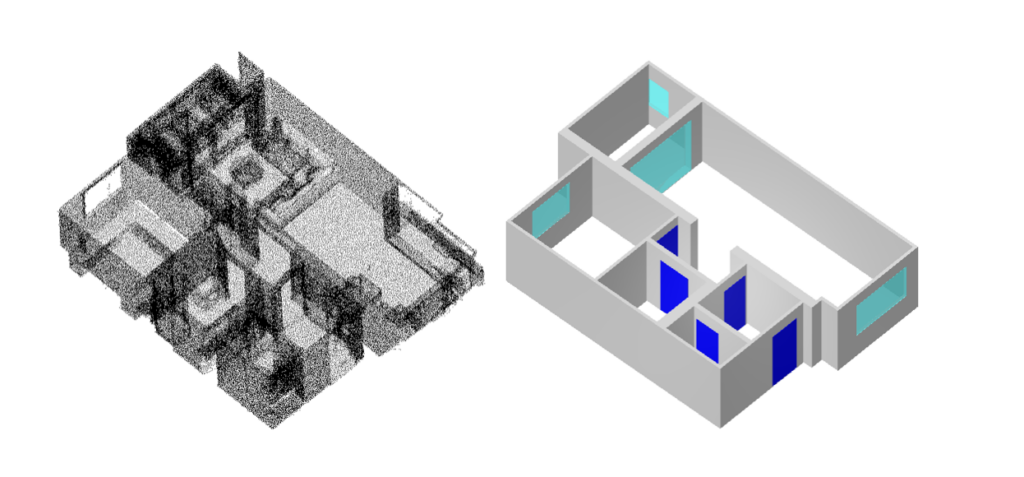

Using the HoloLens 2 mixed-reality headset, the team captures point clouds of existing buildings. These point clouds are then processed by a model that combines deep learning with a Large Language Model (LLM), allowing the system to detect and classify architectural elements such as walls, doors, windows, floors and ceilings.

One of the key contributions of this approach is its multi-inference consensus mechanism. Because LLM outputs can differ from one run to the next, the model is run several times on the same input and the results are aggregated into a consensus. This reduces run-to-run variability and yields more robust, reliable detections.
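The post does not say exactly how the runs are aggregated. One common way to build such a consensus is per-element majority voting, sketched below under two assumptions: each run outputs one class label per detected element, and element IDs are consistent across runs. The function name `consensus_labels`, the element IDs and the `min_agreement` threshold are hypothetical illustrations, not the team's implementation.

```python
from collections import Counter
from typing import Dict, List, Optional


def consensus_labels(
    runs: List[Dict[str, str]], min_agreement: float = 0.5
) -> Dict[str, Optional[str]]:
    """Aggregate per-element labels from several inference runs by majority vote.

    Hypothetical sketch: each run maps an element ID to the class label predicted
    in that run. An element keeps its most frequent label only if the share of
    runs voting for it is strictly greater than ``min_agreement``; otherwise it is
    marked None (no consensus), e.g. to flag it for manual review.
    """
    if not runs:
        return {}

    # Collect every element ID seen in any run.
    element_ids = set()
    for run in runs:
        element_ids.update(run.keys())

    consensus: Dict[str, Optional[str]] = {}
    for eid in sorted(element_ids):
        # Count the labels this element received; runs that missed it cast no vote.
        votes = Counter(run[eid] for run in runs if eid in run)
        label, count = votes.most_common(1)[0]
        # Agreement is measured against the total number of runs,
        # so an element missing from some runs is penalised.
        consensus[eid] = label if count / len(runs) > min_agreement else None
    return consensus


if __name__ == "__main__":
    runs = [
        {"e1": "wall", "e2": "door", "e3": "floor"},
        {"e1": "wall", "e2": "window"},
        {"e1": "wall", "e2": "door"},
    ]
    print(consensus_labels(runs))
    # prints {'e1': 'wall', 'e2': 'door', 'e3': None}
```

Measuring agreement against all runs, rather than only the runs that detected an element, also discards detections that appear only sporadically. Other aggregation rules, such as weighting votes by model confidence, would be equally plausible.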

The final output is a clean, parametric IFC (Industry Foundation Classes) file, the open standard format for BIM data exchange. This file is ready to be used in any IFC-compatible architectural or engineering software.

This work covers the complete pipeline, from the raw point cloud captured on-site with the HoloLens 2 to the exported IFC model visualised in an IFC viewer, producing structured and usable building information models.